The AI and LLM industry is changing and developing rapidly. How will the industry move forward, become regulated and adjust to this new reality of words as a commodity? We’re starting a new chapter of data commodification, one built on access to words. In an as-yet unregulated space, product teams need to self-regulate while government regulation lags behind.

Culture as an industry is nothing new. Neither is the notion that data is ‘the new oil’. With the popularity of AI chatbots and large language models, we’re starting a new chapter of data commodification, built on access to words and their commodification.

Words have become another type of data commodity that is extracted for profit. One of the problems this creates is that words are being commodified without the consent of users or their platforms. So, what will this mean for the large language model (LLM) industry?

Commodifying language in this way is new, and it needs discussion more than the question of whether chat-based platforms produce accurate semantics and syntax, or whether their language ‘feels human’. If private companies commodify decades of conversations in online forums for their own benefit, without users’ informed consent, then we’re opening another can of worms around AI ethics, consent, privacy, and data.

I don’t want to talk about semantics, syntax, or anything specifically linguistic. Let’s instead talk about the emerging problem of language as data, the economy around access to it, and the industry trends I think we’ll observe.

Language is another human attribute to become a digital data commodity

Ownership over data is nothing new on the internet, but ownership of words — big, big volumes of words like on Wikipedia and Reddit — has become valuable.

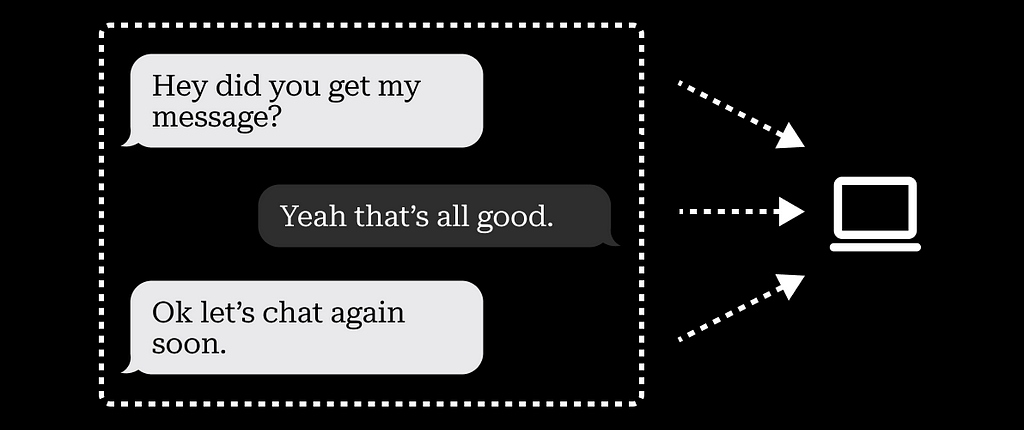

Social relationships (specifically, the data around social interactions) became a digital commodity with the advent of Facebook, Twitter, Instagram, and others. Social media commoditized social relationships through the attention these platforms got from their users (known as the attention economy), which can be packaged and sold. It now feels like social relationships and attention are no longer the only things of value: we’re adding words. The words people have generated on the internet have become a new resource, whether or not their authors wanted that.

What’s new is the use case for the data companies pull from their users, and the types of data they pull. The data is no longer (only) sold to advertisers; it’s also sold, bought, and used to actually create the technology. It’s no longer (only) our attention that’s being sold, but the actual words and thoughts, the content, that users create. People writing on the internet are now providing free labor to feed a new technology which is then sold back to us.

This means that:

1. More organizations will start charging for access to the words on their platforms

2. Engineering teams will start looking elsewhere for free big data

3. Most users will be completely unaware that this is happening (and won’t provide informed consent)

4. Engineering teams will keep pulling free data until regulators step in

5. Big data companies will start changing their Ts&Cs to enable access to words for LLM training purposes

6. Public awareness about the risks of AI and government regulation will lag behind for years

What industry trends will we see?

Words as a Service (WaaS): charging for access to words

Access to words is going to become increasingly political and economic, and it may start to be regulated or restricted. Reddit has already begun to charge for access to its application programming interface (API), which is used to collect data for training LLMs. Teams will begin to look elsewhere or start paying for access, and we may see an economy emerge around this.

Privacy: private LLMs for niche use cases and privacy-minded users

Smaller organizations and individuals may also want to train LLMs with data sets they own but don’t want to make public. One use case for this is research (sorting and finding patterns in large amounts of text) and being able to process (e.g. translate) non-public information. The assumption is that this would be safe and private, like Signal but for LLMs. From today’s perspective this seems quite risky, and I don’t think many researchers or scientists would trust it, so it will probably be a while before we see this done in a way that’s safe and trusted. A fair bit of policy work and privacy implementation needs to happen before this is adopted.

Personalization: tone and voice adapted to big organizations

Big organizations that hold large volumes of conversations and text from chatbots, forums or messaging (think of any large organization that has millions of customer support interactions stored) may look to train LLMs with internal data, such as their staff’s customer support interactions or the advertising and marketing material created over the years. Part of the importance here is that they use material created by the company’s employees, as opposed to its customers. This means that they can develop their own tone and voice and build more specialized chatbots specifically for their service.

The AI and LLM industry is changing and developing rapidly. How will the industry move forward, become regulated and adjust to this new reality of words as a commodity?

My opinion is that product teams need to self-regulate while government regulation lags behind. Niche use cases, as well as products that solve user problems around privacy, tone, and ethical data access, will prevail over those that exploit anyone who writes on the internet for the words they’ve written.

Additional resources

IDEO’s tool for ethical AI

The WIRED Guide to Artificial Intelligence

Many thanks to Tony Salvador for reading and helping to improve an earlier draft of this article.

Words: the new data commodity was originally published in UX Collective on Medium.