Intel RealSense technology pairs a 3D camera and microphone array with an SDK that allows you to implement gesture tracking, 3D scanning, facial expression analysis, voice recognition, and more. In this article, I’ll look at what this means for games, and explain how you can get started using it as a game developer.

What is Intel RealSense?

RealSense is designed around three different peripherals, each containing a 3D camera. Two are intended for use in tablets and other mobile devices; the third, the front-facing F200, is intended for use in notebooks and desktops. I’ll focus on the F200 in this article.

The F200 is already included in a number of different notebooks, as well as a couple of other devices, and will soon be available as a stand-alone USB peripheral. (You can already order or reserve a dev kit version for around $100.)

It consists of:

- A conventional color camera (1080p, 30fps)

- An infrared laser projector and camera (640×480, 60fps)

- A microphone array (with the ability to locate sound sources in space and do background noise cancellation)

The infrared projector and camera can retrieve depth information to create an internal 3D model of whatever the camera is pointed at; the color information from the conventional camera can then be used to color this model in.
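To make that idea concrete, here is a minimal sketch of how a depth image and a color image could be combined into a colored point cloud. It is not the SDK's own code: the pinhole intrinsics (`FX`, `FY`, `CX`, `CY`) are made-up values, the function names are hypothetical, and it assumes the two images have already been aligned pixel-for-pixel and scaled to the same size.

```python
from typing import List, Tuple

# Hypothetical pinhole intrinsics for a 640x480 depth image. Real values
# come from the camera's calibration data, not from this sketch.
FX, FY = 475.0, 475.0  # focal lengths in pixels (assumed)
CX, CY = 320.0, 240.0  # principal point in pixels (assumed)

Point = Tuple[float, float, float]
Color = Tuple[int, int, int]


def deproject(u: int, v: int, depth_m: float) -> Point:
    """Turn depth pixel (u, v), with depth in metres, into a 3D point."""
    x = (u - CX) * depth_m / FX
    y = (v - CY) * depth_m / FY
    return (x, y, depth_m)


def colored_point_cloud(
    depth: List[List[float]], color: List[List[Color]]
) -> List[Tuple[Point, Color]]:
    """Pair every valid depth pixel with the color at the same pixel.

    Assumes both images are the same size and already aligned.
    A depth of 0 is treated as "no reading" and skipped.
    """
    cloud = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if d > 0:
                cloud.append((deproject(u, v, d), color[v][u]))
    return cloud
```

A pixel at the principal point maps straight down the camera's axis, so `deproject(320, 240, 1.0)` gives `(0.0, 0.0, 1.0)`; pixels further from the centre fan out in proportion to their depth.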

The SDK makes it simpler to use the camera’s capabilities in games and other projects. It includes libraries for:

- Hand, finger, head, and face tracking

- Facial expression and gesture analysis

- Voice recognition and speech synthesis

- Augmented reality

- 3D object and head scanning

- Automated background removal

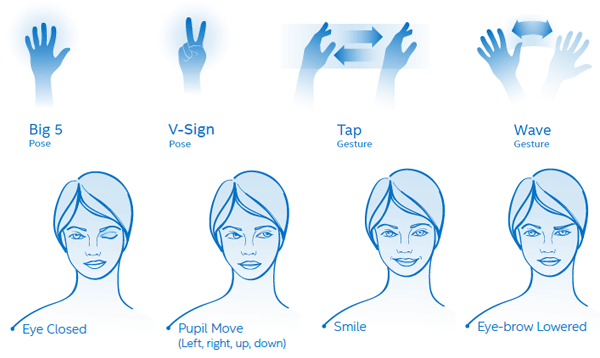

Note that, as well as allowing you to track, say, the position of someone’s nose or the tip of their right index finger in 3D space, RealSense can also detect several built-in gestures and expressions.
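If a gesture you need isn't built in, tracked points give you enough to derive your own. The sketch below is one possible approach, not part of the SDK: a hypothetical `PinchDetector` that takes thumb-tip and index-tip positions (assumed to be in metres, from whatever tracking source you use) and reports a pinch. The two thresholds are illustrative guesses.

```python
import math
from typing import Tuple

Point = Tuple[float, float, float]


class PinchDetector:
    """Reports a pinch while the thumb and index fingertips are close.

    Two thresholds (hysteresis) stop the state from flickering when the
    gap hovers around a single cut-off. Distances are in metres.
    """

    def __init__(self, close_m: float = 0.02, open_m: float = 0.04) -> None:
        self.close_m = close_m  # gap below this starts a pinch (assumed)
        self.open_m = open_m    # gap above this ends a pinch (assumed)
        self.pinching = False

    def update(self, thumb_tip: Point, index_tip: Point) -> bool:
        """Feed in one frame of fingertip positions; returns pinch state."""
        gap = math.dist(thumb_tip, index_tip)
        if self.pinching:
            if gap > self.open_m:
                self.pinching = False
        elif gap < self.close_m:
            self.pinching = True
        return self.pinching
```

Calling `update()` once per frame keeps the state current; a game would typically react to the moment the return value changes rather than to the value itself.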

What RealSense Brings to Games

Here are a few examples of how RealSense can be (and is being) used in games:

Nevermind, a psychological horror game, uses RealSense for biofeedback: it measures the player’s heart rate using the 3D camera, and then reacts to the player’s level of fear. If you lose your cool, the game gets harder!

MineScan, by voidALPHA, is a proof-of-concept that lets you scan real-world objects (like stuffed animals) into Minecraft. Any 3D PC game with an emphasis on mods or personalization could use the RealSense camera’s scanning capabilities to let players insert their own objects (or even themselves!) into the game.

Faceshift uses RealSense to capture facial motion in detail. This technology could be used in real time, within a game, whenever players talk to one another, or during production to record an actor’s expressions as well as their voice for more realistic characters.

There Came an Echo is a tactical RTS that uses RealSense’s voice recognition capabilities to let the player command their squad. It’s easy to see how this could be adapted to, for instance, a team-based FPS.

Years ago, Johnny Lee explained how to (mis-)use a Wii controller and sensor bar to track the player’s head position and adjust the in-game view accordingly. Few games, if any, actually made use of this (no doubt because of the unorthodox setup it required), but RealSense’s head and face tracking capabilities make this possible, and much simpler.
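As a rough illustration of head-coupled perspective, the sketch below computes an asymmetric ("off-axis") view frustum from a tracked head position, so the screen behaves like a window into the scene. It assumes the head position is given in metres relative to the centre of the screen, with z as the distance from the screen; the function name and parameters are hypothetical and don't correspond to any RealSense API.

```python
from typing import Tuple


def off_axis_frustum(
    head: Tuple[float, float, float],
    screen_w: float,
    screen_h: float,
    near: float,
) -> Tuple[float, float, float, float]:
    """Return (left, right, bottom, top) at the near plane.

    head     -- eye position (x, y, z) in metres, relative to the screen
                centre; z is the distance in front of the screen.
    screen_w -- physical screen width in metres.
    screen_h -- physical screen height in metres.
    near     -- near clipping distance in metres.
    """
    hx, hy, hz = head
    if hz <= 0:
        raise ValueError("head must be in front of the screen (z > 0)")
    # Project the screen edges, as seen from the eye, onto the near plane.
    scale = near / hz
    left = (-screen_w / 2 - hx) * scale
    right = (screen_w / 2 - hx) * scale
    bottom = (-screen_h / 2 - hy) * scale
    top = (screen_h / 2 - hy) * scale
    return left, right, bottom, top
```

With the head centred, the frustum is symmetric; as the head moves right, the frustum skews left, which is what produces the "looking through a window" effect. The four values can feed a glFrustum-style projection matrix, with the scene's camera also translated by the head offset.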

There are also several games already using RealSense to power their gesture-based controls:

Laserlife, a sci-fi exploration game from the studio behind the BIT.TRIP series.

Head of the Order, a tournament-style fighting game set in a fantasy world, where players use hand gestures to cast spells at each other.

Space Between, in which you use swimming hand motions to guide turtles, fish, and other sea creatures through a series of tasks in an underwater setting.

Madagascar Move It!, a kids’ game similar to the Let’s Dance series.

Gesture controls aren’t exactly new to gaming, but previously they’ve been almost exclusive to Kinect. Now, they can be used in PC games—that means Steam, and even the web platform.

How to Use RealSense as a Game Developer

First step: download the SDK. (Well, OK, the first step is probably to get a device with a RealSense camera or reserve a dev kit.)

The SDK contains:

- Libraries and interfaces for Java, Processing, C++, C#, and JavaScript

- A Unity Toolkit with scripts and prefabs

- Code samples and demos

- Documentation

Next, take a look at the Intel RealSense SDK Training site. Here, you’ll find getting-started guides, tutorials that walk you through certain features (including the Unity Toolkit), and videos of previous webinars. We’ll also be publishing RealSense tutorials on Tuts+ over the next few weeks.

Intel’s YouTube channel has a great playlist of videos about developing for RealSense. These have a much greater focus on UX and UI than the tutorials above.

These UX Guidelines (PDF) are a great accompaniment to the above videos.

Once you’ve got a good overview of what the SDK can do and how the various libraries work, dive into the documentation for the details.

Finally, check out the official forums to chat with other developers, see what they’re working on, and get advice.

Conclusion

We’ve covered what RealSense is, what game developers are using it for, and how you can get started using it in your own games. Keep an eye on the Tuts+ Game Development section over the next few weeks for some tutorials on head scanning, keyboard-free typing, and expression recognition.
