But what does this all mean, practically speaking? Does any of it actually work? And if so, how effective is it? Is it anything more than a fun toy to play around with?

I’ll venture to answer these questions and more as I put the Advanced Data Analysis tool in ChatGPT to the test.

What Is Advanced Data Analysis Capable Of?

Before I dive into the testing, let’s take a moment to discuss what this feature is supposed to be able to do.

- Analyze Structured Data: It can interpret and process structured data files like CSVs to provide insights and summaries.

- Visualize Data: Create various types of graphs such as bar charts, scatter plots, heatmaps, line graphs, pie charts, histograms, box plots, and area charts.

- Mathematical Problem-Solving: Solve complex mathematical problems and interpret equations from text or images.

- Image Recognition: Understand and analyze images, read text and symbols within them, and classify objects or extract data.

- Code Interpretation: Generate and run Python code based on user prompts.

- Generate Code from Screenshots: Produce code from images like screenshots.

Testing Data Analysis, Manipulation, and Visualization

To begin, I wanted to see how ChatGPT could handle data manipulation. The feature is called Advanced Data Analysis, after all.

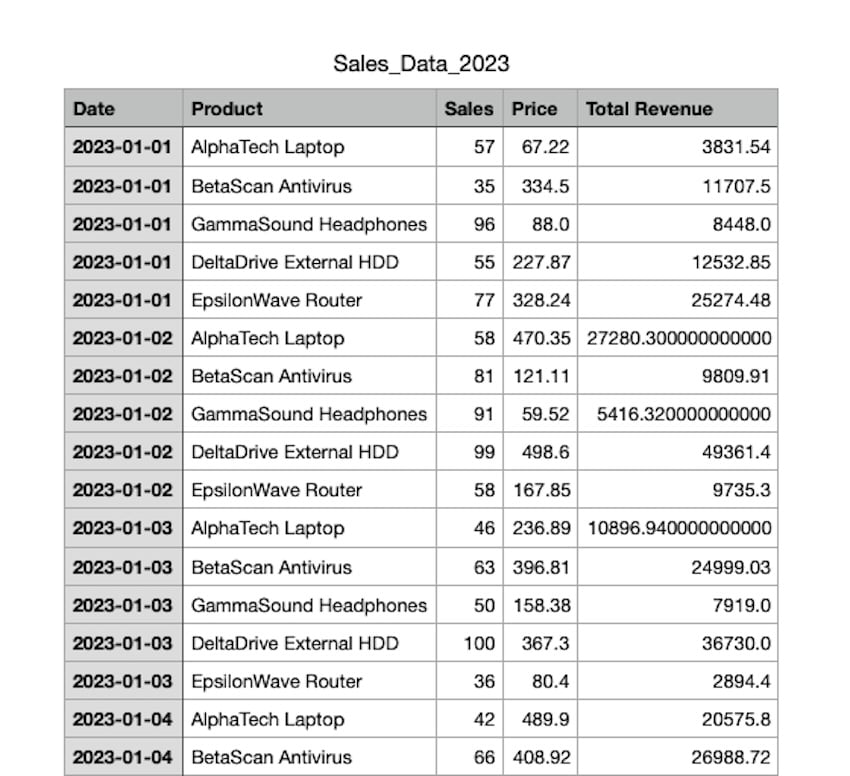

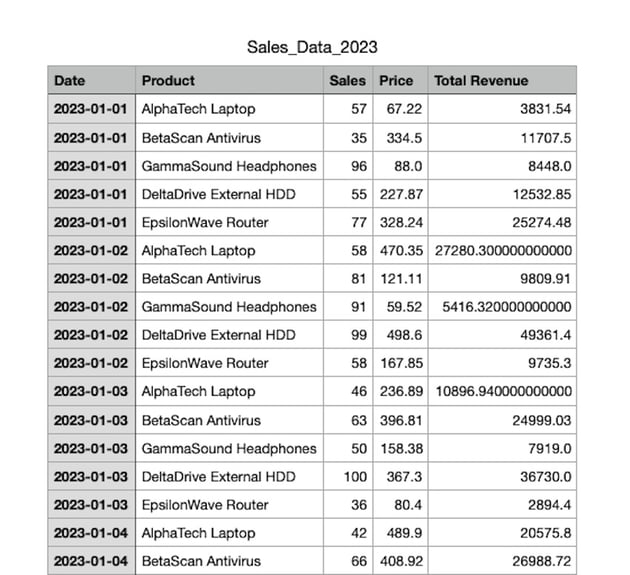

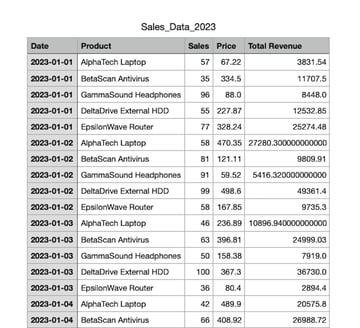

So, I asked it to generate fake sales data for the year 2023 for five different fictional products.

ChatGPT executed a Python script that created a CSV file with randomly generated sales numbers, prices, and total revenues for each day of the year for each product.
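
For a sense of what a script like this might look like, here is a minimal sketch using only the Python standard library. It is not the code ChatGPT produced: the product names, prices, column names, and the 0 to 100 range for daily units are all assumptions made for illustration.

```python
import csv
import random
from datetime import date, timedelta

# Hypothetical products and unit prices; the article does not list the real ones.
PRODUCTS = {
    "Product A": 19.99,
    "Product B": 34.50,
    "Product C": 9.75,
    "Product D": 52.00,
    "Product E": 14.25,
}


def generate_sales_rows(year=2023, seed=42):
    """Return one row per product per day with randomly generated units sold."""
    rng = random.Random(seed)  # fixed seed so the fake data is reproducible
    rows = []
    day = date(year, 1, 1)
    while day.year == year:
        for product, price in PRODUCTS.items():
            units = rng.randint(0, 100)  # assumed range for daily sales
            rows.append({
                "date": day.isoformat(),
                "product": product,
                "units_sold": units,
                "unit_price": price,
                "revenue": round(units * price, 2),
            })
        day += timedelta(days=1)
    return rows


def write_csv(rows, path):
    """Write the rows to a CSV file with a header line."""
    with open(path, "w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)


if __name__ == "__main__":
    write_csv(generate_sales_rows(), "fake_sales_2023.csv")
```

Because the numbers are drawn uniformly at random, every product ends up with nearly the same yearly total, which is worth keeping in mind when reading the charts and the forecast later on.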

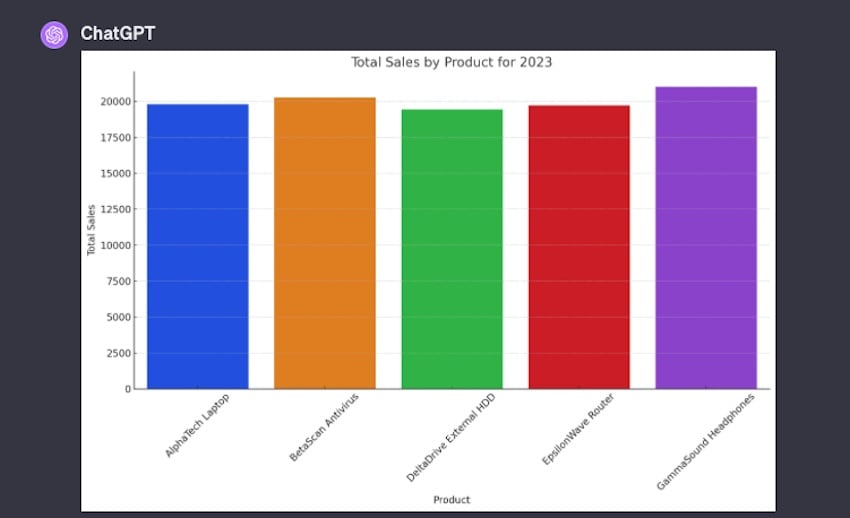

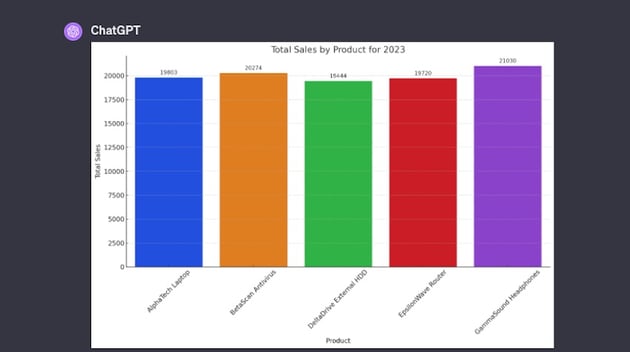

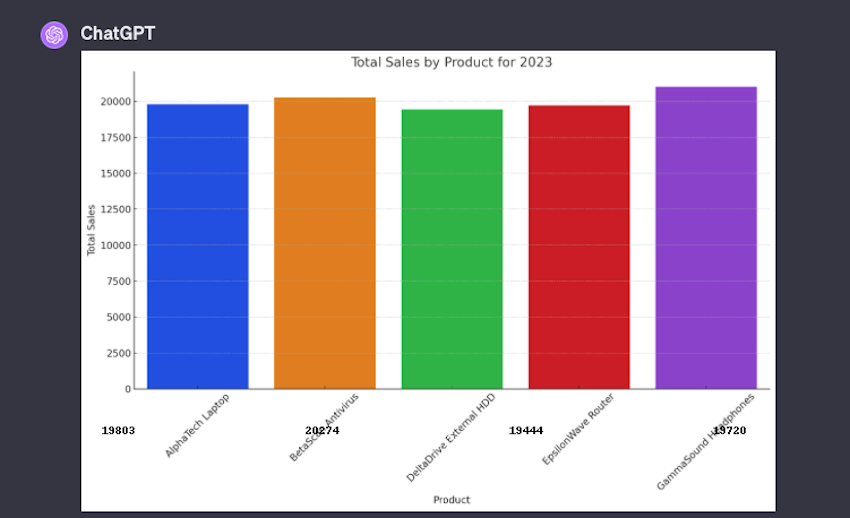

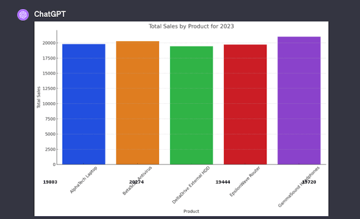

Once the data was generated, I requested that ChatGPT create a graph from this data, representing each product with a different color.

ChatGPT then produced a bar graph illustrating the total sales for each product throughout the year.
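
A chart along these lines can be reproduced by hand with matplotlib. The sketch below is an assumption about the general approach, not ChatGPT's actual code, and the yearly totals are made-up placeholder values rather than figures from the test.

```python
import matplotlib
matplotlib.use("Agg")  # render to a file without needing a display
import matplotlib.pyplot as plt

# Hypothetical yearly unit totals for the five fictional products.
totals = {
    "Product A": 18250,
    "Product B": 17890,
    "Product C": 18410,
    "Product D": 18030,
    "Product E": 18675,
}
# One named matplotlib color per product.
colors = ["tab:blue", "tab:orange", "tab:green", "tab:red", "tab:purple"]

fig, ax = plt.subplots(figsize=(8, 5))
bars = ax.bar(list(totals.keys()), list(totals.values()), color=colors)
ax.set_title("Total sales by product, 2023")
ax.set_ylabel("Units sold")
fig.tight_layout()
fig.savefig("total_sales_by_product.png")
```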

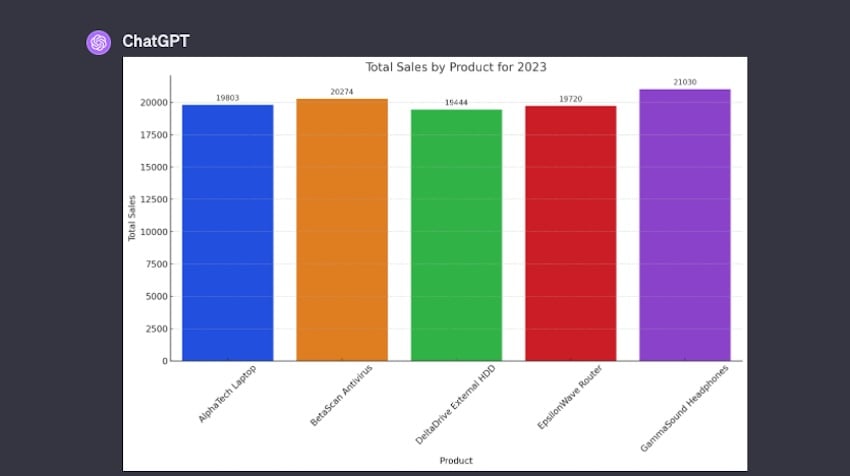

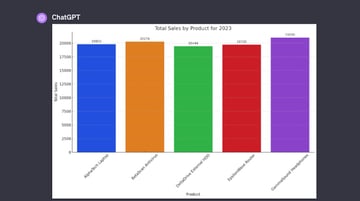

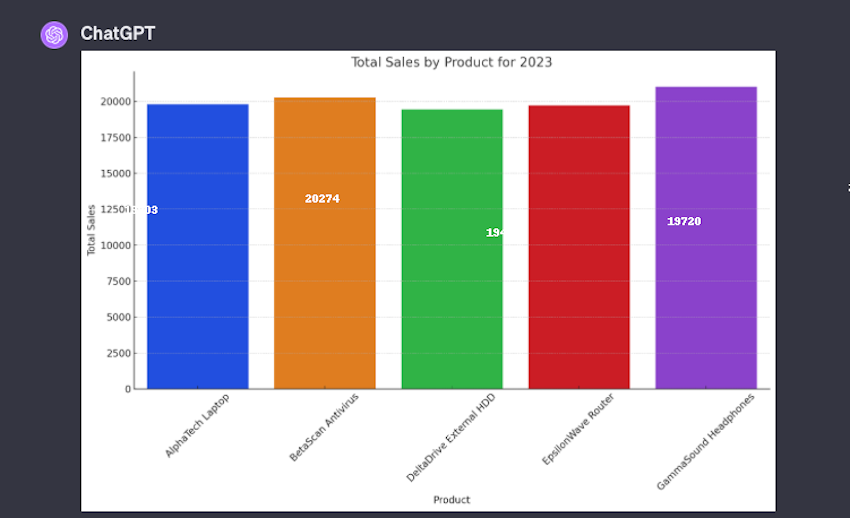

Noticing that the graph lacked clarity in conveying information, I asked ChatGPT to annotate the graph with the total sales numbers for each product. ChatGPT edited the graph accordingly, placing the numbers above each bar for easy reading. But it didn’t place the sales figures where I wanted:

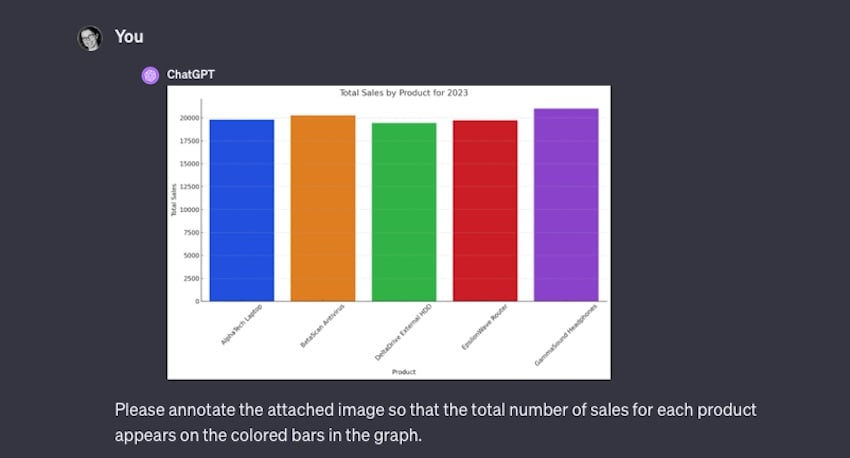

So I uploaded an image of the output graph and repeated my request, asking that it include the sales information on the color bars in the graph.

Unfortunately, it had some issues and the output was illegible. It was sort of on the right track, but the placement was wonky, to say the least:

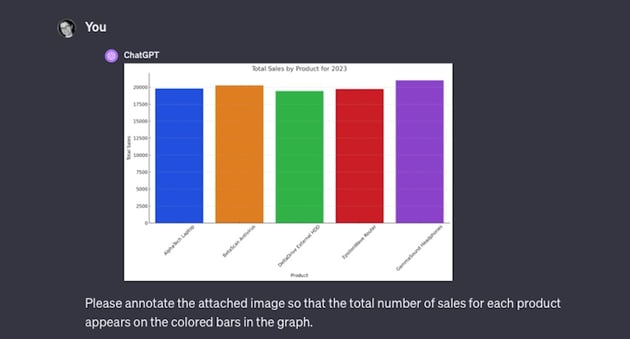

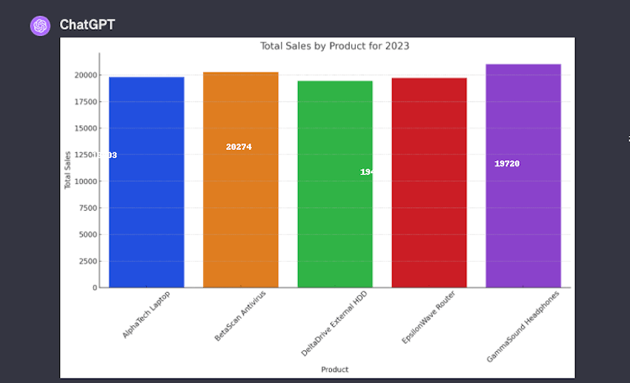

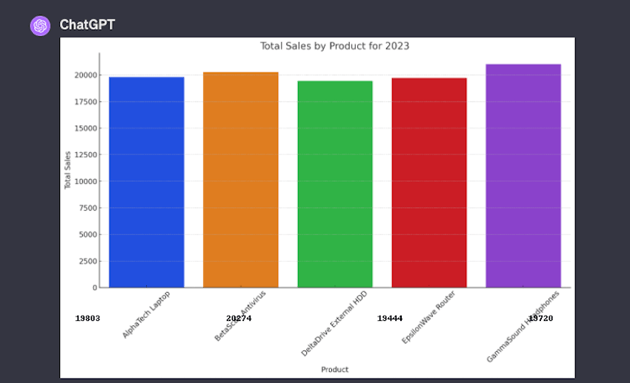

So, I asked for revisions to correct the text color and placement:

In this amended output, the text placement was still way off but it did change the text color as requested:

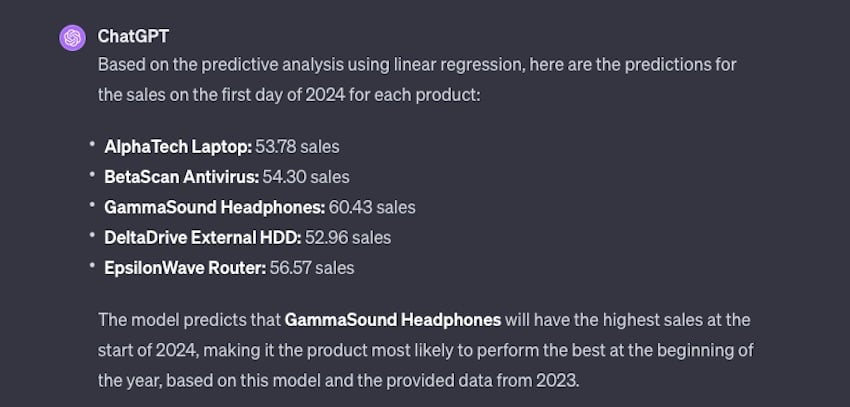
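
If re-prompting keeps missing the mark, label placement like this is also something you can adjust directly in the generated code. Here is a minimal sketch, assuming matplotlib 3.4 or newer (which provides `Axes.bar_label`) and the same kind of made-up totals; it centers each figure inside its bar in white text.

```python
import matplotlib
matplotlib.use("Agg")  # render to a file without needing a display
import matplotlib.pyplot as plt

# Hypothetical yearly unit totals; not the figures from the test above.
totals = {
    "Product A": 18250,
    "Product B": 17890,
    "Product C": 18410,
    "Product D": 18030,
    "Product E": 18675,
}
colors = ["tab:blue", "tab:orange", "tab:green", "tab:red", "tab:purple"]

fig, ax = plt.subplots(figsize=(8, 5))
bars = ax.bar(list(totals.keys()), list(totals.values()), color=colors)

# label_type="center" puts each label in the middle of its bar rather than on top;
# extra keyword arguments such as color are passed through to the text objects.
labels = ax.bar_label(
    bars,
    labels=[f"{value:,}" for value in totals.values()],
    label_type="center",
    color="white",
    fontweight="bold",
)

ax.set_ylabel("Units sold")
fig.tight_layout()
fig.savefig("total_sales_labeled.png")
```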

Sensing I had hit an impasse with this approach, I decided to test ChatGPT’s ability to conduct predictive analysis. I prompted it with a simple request: Which product is most likely to perform the best in 2024?

The output was straightforward:

I could see how if I’d provided it with more varied data, the output would likely be more helpful and offer real insights to potential trends. But this does give an idea of what’s possible here.

After that, I asked it to create an image based on the sales data.

I gave it several specifications for color, placement, and what the imagery should contain. And the output wasn’t terrible for a first attempt:

However, it showed six products instead of five, and the sales figures were all incorrect. The color scheme was a bit off, too, but it was in the general vicinity of what I’d asked for.

I asked for revisions, but it couldn’t do that so it output a new image instead.

Unfortunately, this output really wasn’t any better. There are definite limitations to what ChatGPT can do. The Advanced Data Analysis features are functional when dealing with datasets and general graphical output. I could see this being super helpful when trying to organize a product catalog, sales figures, or trends, but it falls apart when you ask anything super specific of it.

Testing Web Design and Coding Output

I also wanted to test ChatGPT’s ability to analyze and output code.

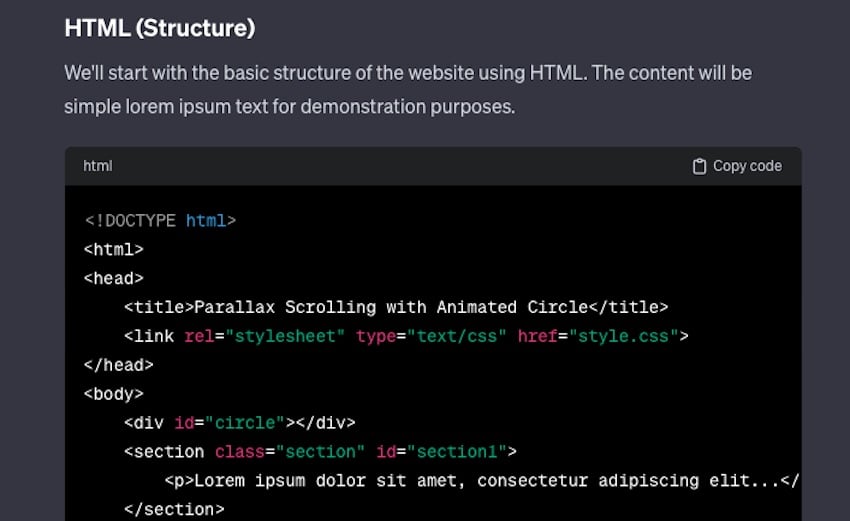

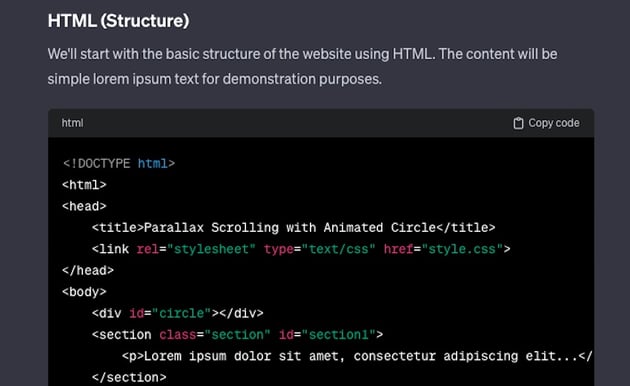

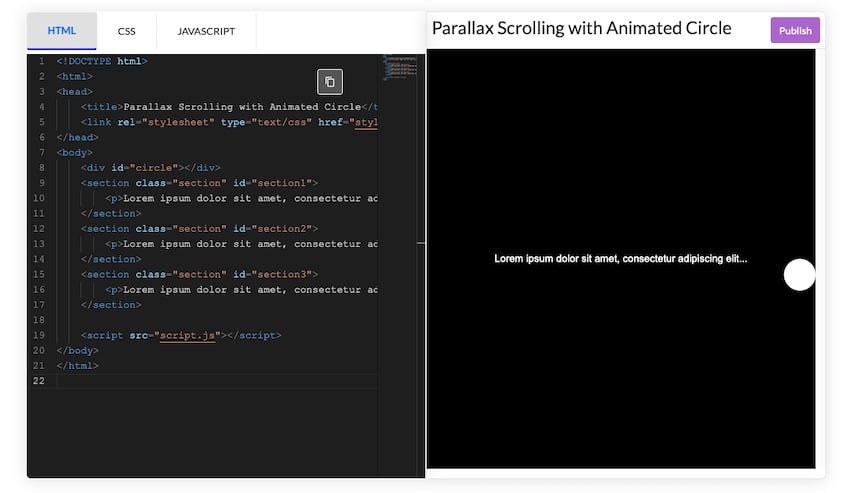

I began by tasking ChatGPT with creating a simple website featuring specific design elements: a black background, white text, parallax scrolling, and an animation of a circle that moves across the screen from right to left as you scroll.

ChatGPT provided comprehensive code snippets for HTML, CSS, and JavaScript that would achieve the desired effects, explaining the function of each code block and how they work together to create the website’s functionality and style.
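
To give a rough idea of how little code a page like this needs, here is a small Python script that writes out a single-file version. It is a sketch under my own assumptions, not the code ChatGPT returned: the element names, the half-speed parallax on the heading, and the circle size are all illustrative choices.

```python
from pathlib import Path

# A single-file page: black background, white text, a heading that scrolls at
# half speed (simple parallax), and a circle that moves right to left on scroll.
PAGE = """<!DOCTYPE html>
<html lang="en">
<head>
<meta charset="utf-8">
<title>Scroll demo</title>
<style>
  body { margin: 0; background: #000; color: #fff; font-family: sans-serif; }
  section { min-height: 100vh; padding: 4rem 2rem; box-sizing: border-box; }
  /* The circle stays pinned to the viewport and starts at the right edge. */
  #circle { position: fixed; top: 50%; right: 0; width: 60px; height: 60px;
            border-radius: 50%; background: #fff; }
</style>
</head>
<body>
<section><h1 class="parallax">Hello</h1></section>
<section><p>Keep scrolling.</p></section>
<section><p>The end.</p></section>
<div id="circle"></div>
<script>
  const circle = document.getElementById("circle");
  const heading = document.querySelector(".parallax");
  window.addEventListener("scroll", () => {
    const maxScroll = document.documentElement.scrollHeight - window.innerHeight;
    const progress = maxScroll > 0 ? window.scrollY / maxScroll : 0;
    // progress runs from 0 to 1, carrying the circle from the right edge to the left.
    const travel = window.innerWidth - circle.offsetWidth;
    circle.style.transform = `translateX(${-progress * travel}px)`;
    // Pushing the heading down by half the scroll distance makes it lag behind.
    heading.style.transform = `translateY(${window.scrollY * 0.5}px)`;
  });
</script>
</body>
</html>
"""

if __name__ == "__main__":
    Path("index.html").write_text(PAGE, encoding="utf-8")
```

Running the script and opening the resulting `index.html` in a browser is enough to try it; no build step or hosting is involved.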

It all looked good to me in the code snippets but I, of course, had to put it to the test. So I input these code snippets into the tiiny.host HTML tester and it actually worked.

Unfortunately, when I tried to publish the sample site, the code broke. So, I tested it in CodePen and got much better results:

It’s not pretty, but it definitely followed the prompt I provided.

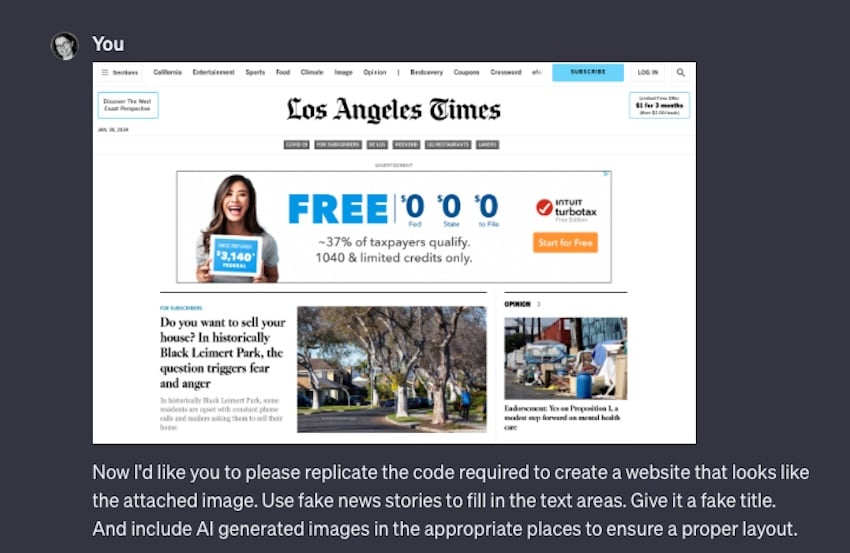

Next, I wanted to test to see if ChatGPT could view an image of a website and replicate its code based on appearance alone. I kept my prompt pretty vague. I really wanted to see what it might do with this information.

ChatGPT carried out several processes in its response. First, it generated the HTML and CSS for this site. But the code was super bare-bones, so I had to intervene and prompt it to be more detailed right from the outset. Once that was fixed, it finished generating the code and then created two AI images to use as placeholders.

It even told me the prompts it used to generate the images.

ChatGPT then automatically went back and altered the HTML to reflect the image names. Of course, when I downloaded these AI images, neither had the file names it inserted into the HTML. But I digress.

I input the code provided into CodePen. I then uploaded the AI images to external hosting and inserted the URLs into the appropriate places within the HTML. I did have to make adjustments and add sizing to the images manually.

The resulting site is pretty bare-bones. But since I used the LA Times as a source image, that’s understandable. And I’m sure if I continued to alter the prompt, I’d get more details like dividers, columns, and ad placeholders.

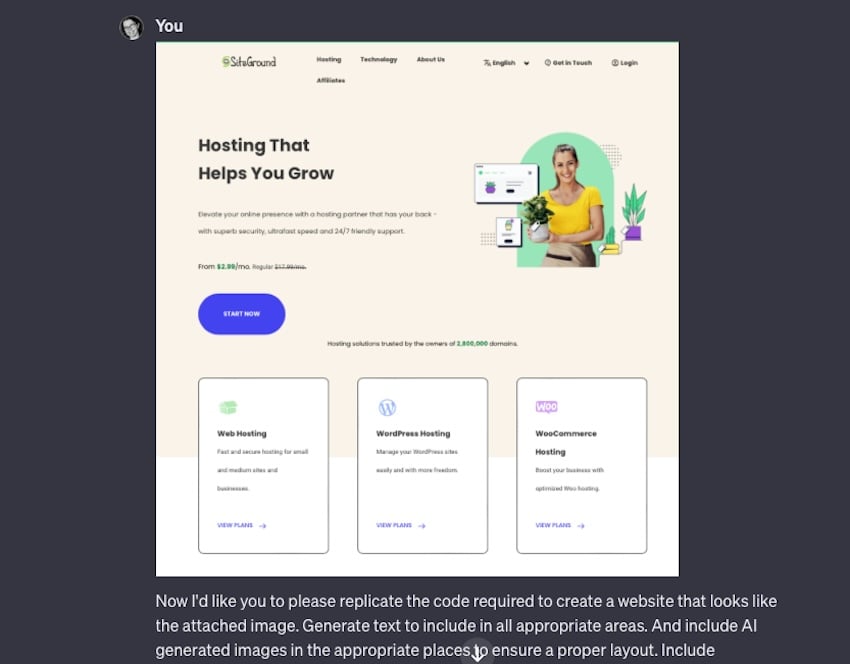

Nevertheless, I wanted to try one more time with a more complex-looking site to put this tool through its paces.

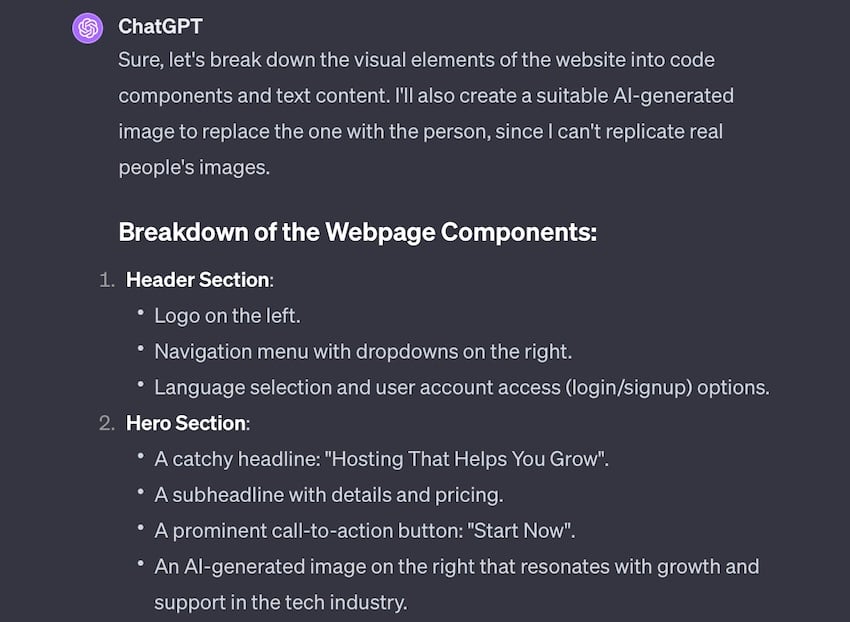

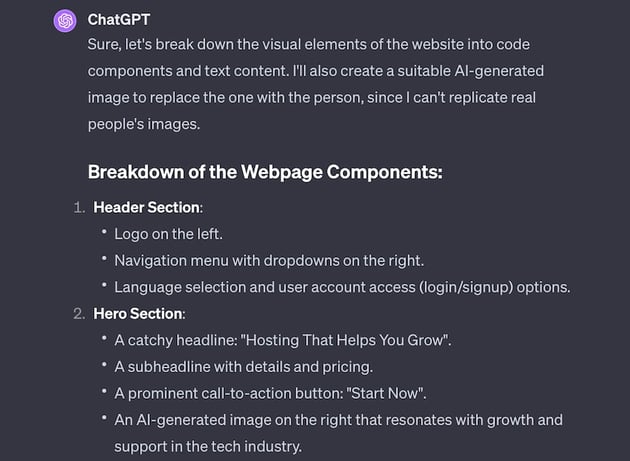

The last thing I asked ChatGPT to do was to recreate the design of the SiteGround homepage.

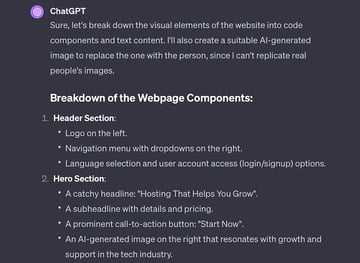

This time, it output a lengthy description of its analysis of the image. It was able to read all the text within the image and identify where the navigation, logo, page links, and CTA button should be.

Then it generated an image to use and created the site’s code. Unfortunately, it lumped the HTML and CSS together and omitted any styling info. So I prompted it to split the code into usable sections and to add styling, and I provided a color scheme.

The next output was great but was filled with placeholders and instructions to “repeat text here..” and such, so I prompted ChatGPT one more time to flesh out the code so that it would be usable immediately.

There was a bit more back-and-forth, because it omitted the dropdown menu I asked for entirely, but after two more tries, it produced a general homepage design. Lastly, I asked it to create a logo for this mockup, and this was the final result:

Is Advanced Data Analysis Worth It?

If you know how to code already, I can’t imagine that this approach would save you any time building a website. There’s still a lot of back-and-forth required to get anything close to usable, and the output, even then, isn’t spectacular or ready for a live site.

That being said, if you don’t know how to code, this does work in creating accurate code that loads properly and is usable. With more refinement, I can see how you could land on an output that serves your purposes. This would be especially useful if you have a lot of reference images and/or a clear idea of what you want, including fonts, color schemes, and images ready to go.

Now for analyzing data, this is quite effective. And though it’s not a replacement for a human evaluating data, it could certainly serve as a good way to streamline data organization and the creation of data visualizations. I’d say leave the interpretation up to the humans, but putting info in a more usable order? Yes, ChatGPT Plus might be worth its price tag for that feature alone.